GENEA Challenge 2023

★ Latest news ★

The GENEA team is preparing an online leaderboard for benchmarking gesture generation models with human evaluation. This project is the evolution of the GENEA Challenge, so stay tuned! If you would like to stay updated on major developments in the leaderboard project, sign up with your e-mail address at this link!

Challenge results now available! Results and materials from the Challenge can be found on the main GENEA Challenge 2023 results page.

The GENEA Challenge 2023 on speech-driven gesture generation aims to bring together researchers who use different methods for non-verbal-behaviour generation and evaluation, and hopes to stimulate discussion on how to improve both the generation methods and the evaluation of the results.

This will be the third installment of the GENEA Challenge. You can read more about the previous GENEA Challenge here.

This challenge is supported by the Wallenberg AI, Autonomous Systems and Software Program (WASP), funded by the Knut and Alice Wallenberg Foundation, with in-kind contribution from the Electronic Arts (EA) R&D department, SEED.

Important dates

GENEA Challenge programme

All times in Paris' local timezone (UTC+2)

Challenge papers

The FineMotion entry to the GENEA Challenge 2023: DeepPhase for conversational gestures generation

Vladislav Korzun, Anna Beloborodova, Arkady Ilin [OpenReview]

Gesture Motion Graphs for Few-Shot Speech-Driven Gesture Reenactment

Zeyu Zhao, Nan Gao, Zhi Zeng, Guixuan Zhang, Jie Liu, Shuwu Zhang [OpenReview]

Diffusion-based co-speech gesture generation using joint text and audio representation

Anna Deichler, Shivam Mehta, Simon Alexanderson, Jonas Beskow [OpenReview]

The UEA Digital Humans entry to the GENEA Challenge 2023

Jonathan Windle, Iain Matthews, Ben Milner, Sarah Taylor [OpenReview]

FEIN-Z: Autoregressive Behavior Cloning for Speech-Driven Gesture Generation

Leon Harz, Hendric Voß, Stefan Kopp [OpenReview]

The DiffuseStyleGesture+ entry to the GENEA Challenge 2023

Sicheng Yang, Haiwei Xue, Zhensong Zhang, Minglei Li, Zhiyong Wu, Xiaofei Wu, Songcen Xu, Zonghong Dai [OpenReview]

Discrete Diffusion for Co-Speech Gesture Synthesis

Ankur Chemburkar, Shuhong Lu, Andrew Feng [OpenReview]

The KCL-SAIR team's entry to the GENEA Challenge 2023 Exploring Role-based Gesture Generation in Dyadic Interactions: Listener vs. Speaker

Viktor Schmuck, Nguyen Tan Viet Tuyen, Oya Celiktutan [OpenReview]

Gesture Generation with Diffusion Models Aided by Speech Activity Information

Rodolfo Luis Tonoli, Leonardo Boulitreau de Menezes Martins Marques, Lucas Hideki Ueda, Paula Paro Dornhofer Costa [OpenReview]

Co-Speech Gesture Generation via Audio and Text Feature Engineering

Geunmo Kim, Jaewoong Yoo, Hyedong Jung [OpenReview]

DiffuGesture: Generating Human Gesture From Two-person Dialogue With Diffusion Models

Weiyu Zhao, Liangxiao Hu, Shengping Zhang [OpenReview]

The KU-ISPL entry to the GENEA Challenge 2023-A Diffusion Model for Co-speech Gesture generation

Gwantae Kim, Yuanming Li, Hanseok Ko [OpenReview]

Call for participation

The state of the art in co-speech gesture generation is difficult to assess since every research group tends to use its own data, embodiment, and evaluation methodology. To better understand and compare methods for gesture generation and evaluation, we are continuing the GENEA (Generation and Evaluation of Non-verbal Behaviour for Embodied Agents) Challenge, wherein different gesture-generation approaches are evaluated side by side in a large user study. This 2023 challenge is a Multimodal Grand Challenge for ICMI 2023 and is a follow-up to the first edition of the GENEA Challenge, arranged in 2020.

We invite researchers in academia and industry working on any form of corpus-based generation of gesticulation and non-verbal behaviour to submit entries to the challenge, whether their method is driven by rules or machine learning. Participants are provided with a large, common dataset of speech (audio + aligned text transcriptions) and 3D motion to develop their systems, and then use these systems to generate motion on given test inputs. The generated motion clips are rendered onto a common virtual agent and evaluated for aspects such as motion quality and appropriateness in a large-scale crowdsourced user study.

The results of the challenge are presented in hybrid format at the 4th GENEA Workshop at ICMI 2023, together with individual papers describing each participating system. All accepted challenge papers will be published in the main ACM ICMI 2023 proceedings.

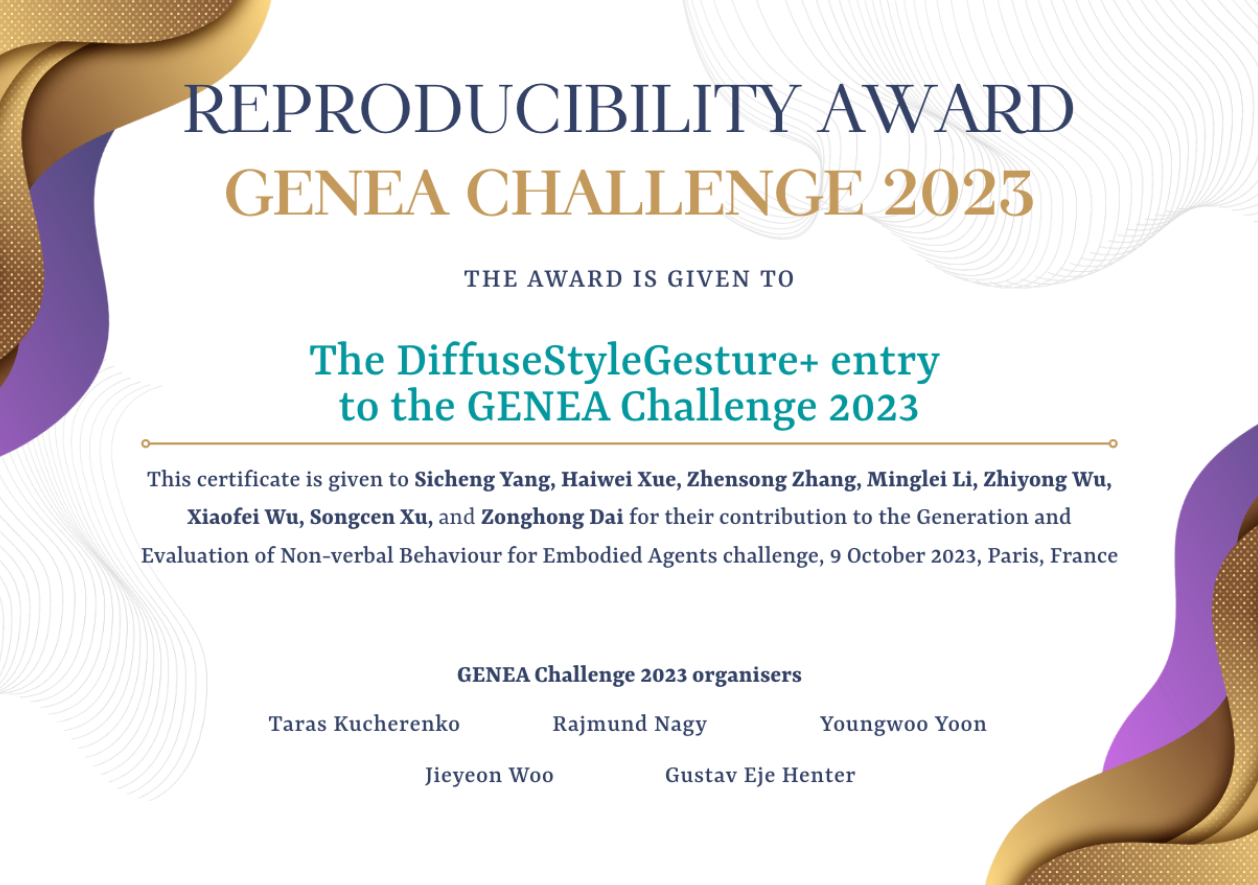

Reproducibility Award

Reproducibility is a cornerstone of the scientific method. Lack of reproducibility is a serious issue in contemporary research, which we want to address at our workshop. To encourage authors to make their papers reproducible, and to reward the effort that reproducibility requires, we are introducing the GENEA Workshop Reproducibility Award. All short and long papers presented at the GENEA Workshop will be eligible for this award. Please note that it is the camera-ready version of the paper which will be evaluated for the award. The award goes to the paper with the greatest degree of reproducibility. The assessment criteria include:

- ease of reproduction (ideal: it just works; if there is code, it is well documented and we can run it)

- extent (ideal: all results can be verified)

- data accessibility (ideal: all data used is publicly available)

by Sicheng Yang, Haiwei Xue, Zhensong Zhang, Minglei Li, Zhiyong Wu, Xiaofei Wu, Songcen Xu, Zonghong Dai.

Organising committee

The main contact address of the workshop is: genea-challenge@googlegroups.com.

Challenge organisers